As you do from time to time, I was thinking about what leadership looks like. After a full-on couple of weeks I was catching up on work this week. I had to pull a few things together and it was a lot easier because the direction I wanted to go in was clear and I… Continue reading Leadership as setting the stage

Category: Observations

Agile is a team sport

I am a good team player, but ... If you work in a team, you need to be a team player. Otherwise what is the point of being in a team? Having said that, I have worked in two very different types of teams where, I think, being a team player means something different:… Continue reading Agile is a team sport

The retro I didn’t run – Predictability

One of the paradoxes of working in an agile way is that it should create a predictable rhythm (or cadence) but it is actually best suited to tackling rapid change and great uncertainty. Predictable work in the face of change and uncertainty sounds like an odd thing to aim for, which means that some agile… Continue reading The retro I didn’t run – Predictability

Being mindful of values

I just read a book on "Mindful Leadership". I like to read books on mindfulness, leadership, presence, and similar topics because I usually have one of two reactions. Sometimes I feel a rush of self-affirming outrage. I almost want to yell at the pages of the book when I spot egregious errors or jingoistic regurgitation of motherhood… Continue reading Being mindful of values

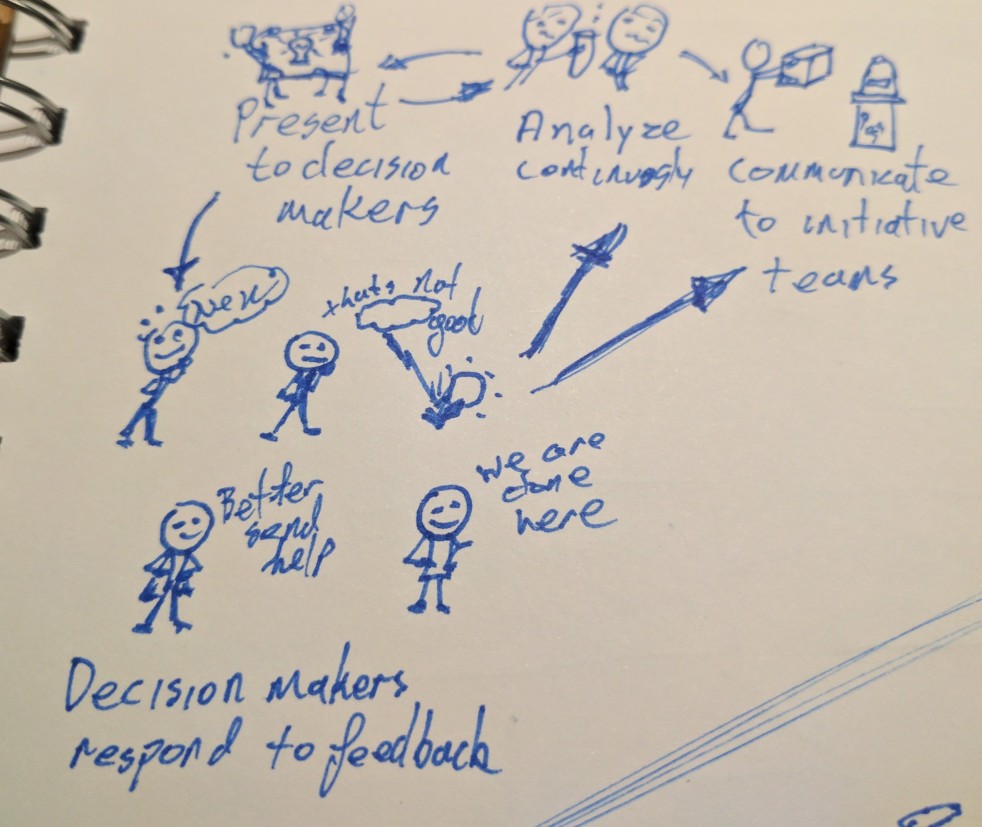

Quick comments on decision cycle time

Coming into the Christmas break, I am trying to close off as much work as possible. I want to leave the office with a feeling that I am done with the year. Hopefully, I can then come back ready to start a fresh year, without much "half-done" work to complete. But going through my outstanding work… Continue reading Quick comments on decision cycle time

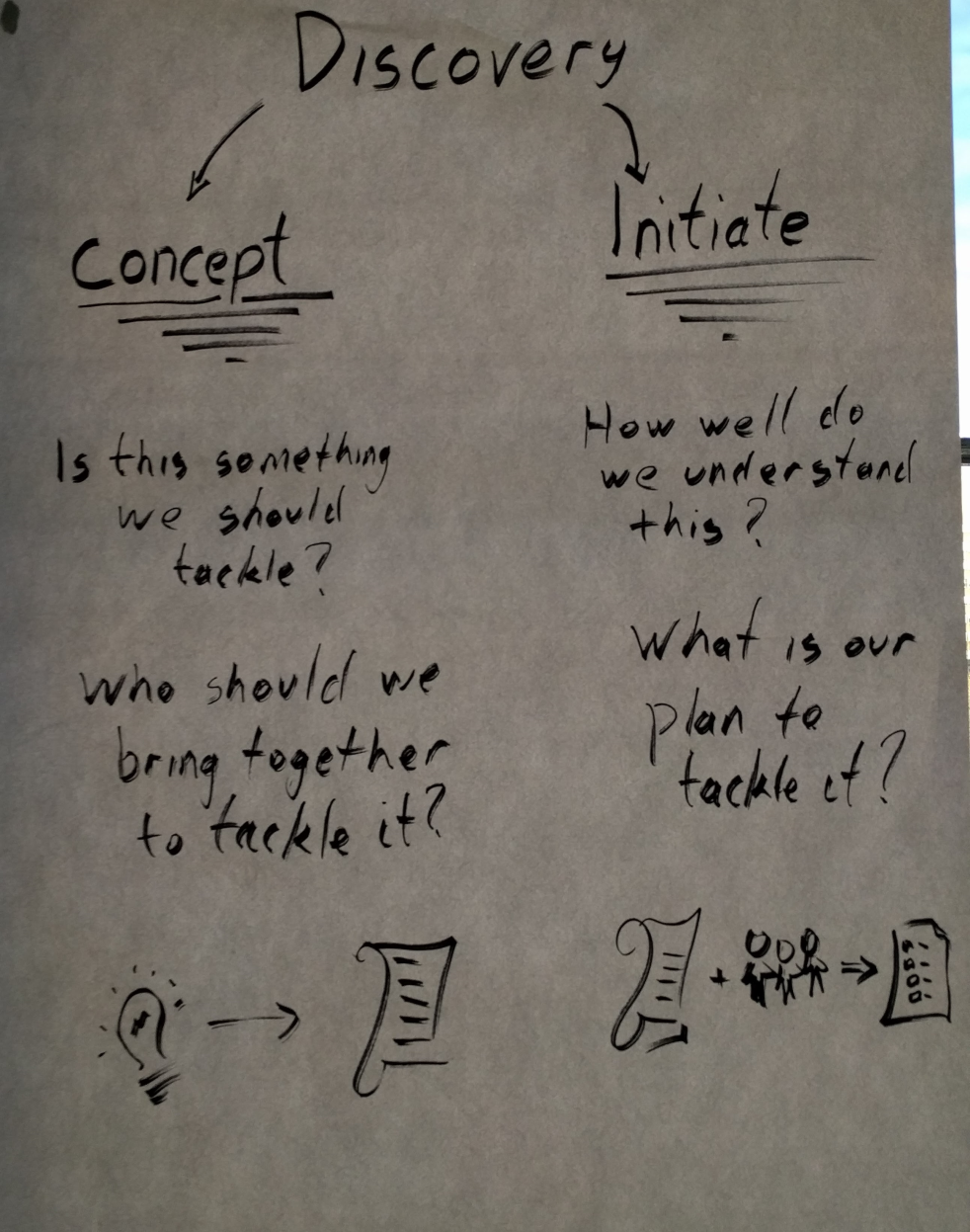

Should discovery work have milestones?

Product people often talk about doing continuous customer discovery, which is great. Engineering teams also talk about wanting to do some discovery before building something complex, which is great. But next time someone says they need to "do some discovery", ask them what they mean by the word discovery. I worry that people are using the… Continue reading Should discovery work have milestones?

The power of ignorant testers

I have always been amazed at the ability of some people to very quickly find a gap in logic, spot a customer experience issue or discover an "unintended consequence" of doing something that is otherwise good. I don't think this comes from specific subject matter expertise, but rather a combination of mindset, presence and a… Continue reading The power of ignorant testers

On leadership surveys

When I was starting out at work there was a huge culture of "leader as coach" and "leadership at every level". We were all expected to be learning from senior team members and to be coaching and leading each other. Looking back I think it was a privilege to be part of a culture that… Continue reading On leadership surveys

This is how I assess development goals

This is a long read for a blog article. I will discuss trees, effective assessment of development goals and other topics I find interesting. I hope also to answer the simple question "how do I assess development goals?" Looking at trees: When you see a magnificent tree in the forest, it is obvious that the… Continue reading This is how I assess development goals

Managers and dev plans

Ongoing growth should be an essential part of every artisan or professional's work. Our careers and our personal development are in our own hands now. More than that, I believe that as knowledge workers, we have an obligation to evolve as fast as changes in our profession are happening. To this end, many companies have… Continue reading Managers and dev plans