I have been assessing some teams recently, in order to diagnose where they can create further improvements in their performance. This assessment will be valuable in helping the team decide where to focus their attention in continuing their growth.

Really though? Are both of those statements really true? I guess the following needs to be true for the assessment to be worthy of their consideration:

- I am doing an assessment of some kind;

- That assessment is designed for the team to use in improving their performance; and

- The assessment is good.

Of course, whether the team actually use the assessment and whether the usage of the assessment leads to improvements are still not decided, even if the above statements are true. I won’t look at these last points yet though, because these are about change management and I should only worry about the team making use of the assessment if it is actually good.

Assessment 1 – Am I doing some assessment?

There may be reasons to believe that the world is an illusion or that I am a butterfly dreaming that I am an agile coach doing assessments. Maybe soon I will wake up flapping my wings around and eating flowers, thinking to myself:

Wouldn’t it be lovely to be in a world where I really was a human, assessing team performance.

Alas that was just a dream. Back to the daily grind of fluttering about looking for nice flowers to land on so I can enjoy their nectar.

A butterfly, waking from a dream about being an agile coach

OK – let’s start by creating some assumptions. Let’s take it as a given that the team and I exist and that I have done something I called an assessment.

Even then – the range of assessments I could do is probably huge.

So let’s confirm that I have also determined the scope of what I am assessing, which I have.

If, for example, I didn’t know whether the team has a clear purpose and a decent level of respect for each other, then I might be wasting my time assessing the frequency of meetings the team has, or the alleged velocity in previous sprints. Similarly, if I already know the team is an established one, with a clear purpose and team members who respect each other, then there seems little point in assessing these things, since I already know what the result will be.

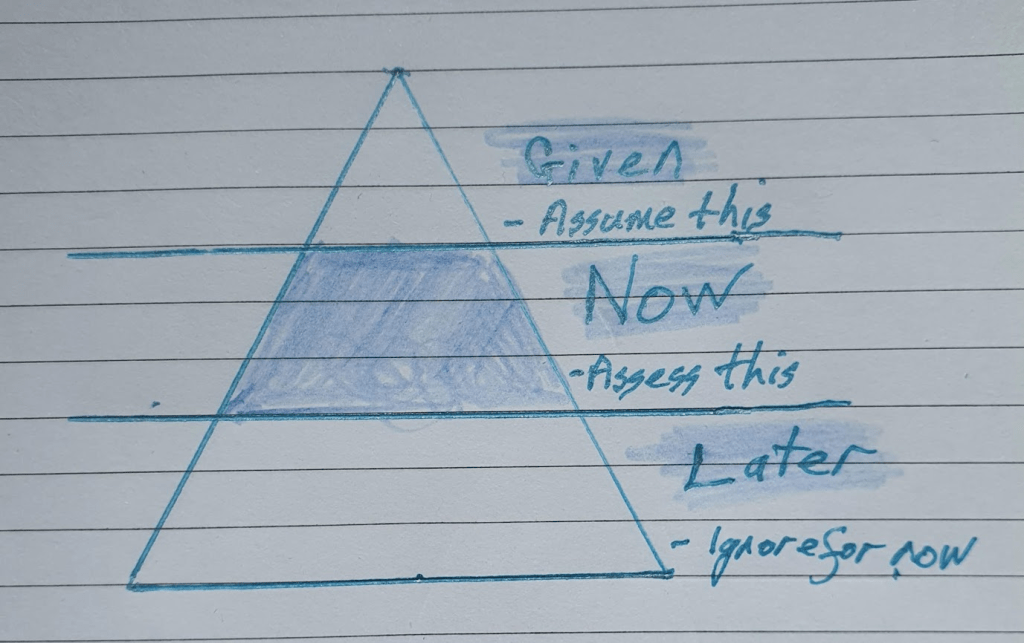

So what I need to decide before I start assessing is:

- What will I take as “a given”, which I will therefore not assess? For example, this could include the assumption that the team is working on the right goals, if I am assessing the way the team breaks down its backlog.

- What I will actually be assessing now, which is hopefully useful.

- What should be left until later? Perhaps because it is less important or because I can learn and apply something from a shorter assessment rather than assessing too much at once. The same way the team slice their stories into thin slices of value, I should slice my assessments into thin slices of useful information that can be acted on.

Cool – I know what I am assessing.

Assessment 2 – Is this really for the team?

I am feeling a little philosophical today, so let me ask a big, abstract question – Why do any assessment at all?

As with all things agile, if I say that something is valuable (and therefore potentially worth doing), then I should be able to say WHOM it is valuable to.

- Who actually gets the benefit of this work?

- What makes it valuable to them?

Potentially, I could share the assessment with any of the following people, who would gain some kind of benefit from me sharing it. Depending on who it is and what they want, the assessment I do might be quite different. I might assess the team:

- For the “team selector” who creates and maintains the team and wants information to support them to:

- Select who is on the team

- Select who on the team is assigned to a project, mission, game or training course;

- Assess people for promotion, bonuses, elite training; or

- Assess people to design a curriculum for training in areas needed, potentially customized to different needs

- For the “bill payer” who wants information to support them to:

- Understand whether the goals the bill payer has paid to achieve are actually being achieved;

- Understand where the costs in time and money are being consumed by the team;

- Influence what the team will strive for and what they will ignore or avoid

- For the people who are being assessed, in order to:

- Help the learning of those being assessed

- Create a sense of satisfaction and momentum

- Clarify and set goals and standards to aspire to

- Gain a certification that demonstrates their accomplishments and qualifications.

So that clarifies some things for me – the assessment should not be an end in itself but should be something that adds value to someone. Of course it could become too broad, if I aim to use the assessment to meet all the needs of all the people.

In the specific case that I am thinking of, I could do an assessment for the coaches and managers to make decisions about what “curriculum” to create for teams, or I could do an assessment for the team members themselves to learn where and how to improve. Both might be valid goals but it might be better to choose one of those goals to optimize for, rather than hoping to kind of achieve both.

Maybe I should even have some user stories for my assessment:

This assessment will help (who) to do (what) so (there is some benefit); or

This assessment will provide (who) with (what insights, validations or information) so they can (make what decisions, or improve against what goals)

Says the coach, just before conducting the assessment

In this case I chose “This assessment will provide the (specific) team with insights about the way they work together so they can set better improvement goals for themselves.”

Defining a goal like this sets me up much better than saying “I will assess the team,” and even better than if I said “I have this health check so I guess that is what the team needs.”

So let’s check in. I definitely did some kind of assessment on a team. In fact I even knew who would get value and what that value should be. Finally I had a big triangle to wave around (or more accurately I was able to say what was given, for the purpose of the assessment, what I would focus on and what I would leave for later).

If I know this much, I should be good to go, but there is still the question of whether the specific assessment I perform will achieve my goal.

Assessment 3 – Is the assessment a good assessment?

Academics, scientists and quality freaks have done a lot of good work to help us define what a good assessment looks like. Let me list the key things I have taken away from the research, which I personally think define a good assessment.

You do not need to nail each of the following but you should define how important each is to you when you are doing your assessment.

Reliability

Will the assessment give the same results when conducted multiple times on multiple targets (or maybe “teams” is a better word)?

For example:

- If I assess a team multiple times, and they are still performing at the same level, would my assessment give the same result each time?

- Will my results vary depending on the time of day, where they are in their current sprint or the stage they are at in their quarterly rhythm?

- If I assess different teams, who are performing just as well as each other, but who are using slightly different tools and techniques, then will I get the same result?

- If there are multiple assessors, will the result depend on the assessor, or can people expect the same result from each assessor?

Since I was doing a single assessment on one team, for their own learning, I gave less attention to this factor. On the other hand, if I had been assessing multiple teams at multiple locations to create a shared learning agenda across teams or a report on where to design coaching for multiple teams, then this would have become a lot more important.
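If there were multiple assessors, one rough way to check whether the result depends on the assessor would be to compare their ratings of the same items. The sketch below is only an illustration under stated assumptions: the rating labels and both lists of ratings are made up, and Cohen's kappa is a standard agreement statistic chosen for this sketch rather than a description of how the assessment was actually done.

```python
from collections import Counter


def percent_agreement(ratings_a, ratings_b):
    """Fraction of items on which two assessors gave the same rating."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("Both assessors must rate the same non-empty set of items")
    matches = sum(a == b for a, b in zip(ratings_a, ratings_b))
    return matches / len(ratings_a)


def cohens_kappa(ratings_a, ratings_b):
    """Agreement between two assessors, corrected for chance agreement."""
    observed = percent_agreement(ratings_a, ratings_b)
    n = len(ratings_a)
    counts_a = Counter(ratings_a)
    counts_b = Counter(ratings_b)
    # Chance agreement: probability both assessors pick the same label
    # if each rated independently with their own label frequencies.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:
        # Both assessors used one identical label for everything.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical ratings of the same four practices by two assessors.
assessor_1 = ["high", "high", "low", "low"]
assessor_2 = ["high", "low", "low", "low"]

print(percent_agreement(assessor_1, assessor_2))  # 0.75
print(cohens_kappa(assessor_1, assessor_2))       # 0.5
```

A kappa near 1 would suggest people can expect much the same result from each assessor, while a value near 0 would suggest the agreement is no better than chance. With only a handful of items, as here, the number is more of a conversation starter than a verdict.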

Validity

Does the assessment actually measure what it is supposed to measure?

It is surprisingly easy to build measures that do not actually measure the right thing.

For example, if I say I am measuring quality of work or teamwork, but I measure velocity or speed, then this might not be correct. Speed might increase as a result of good teamwork or improved quality, but it might also increase because of less teamwork, reduced testing or building features without understanding quality from the user’s perspective.

Perhaps more subtly, if I am measuring “defects the team found and decided to fix” as a measure of quality, but I define quality as maintainability or user experience, then I am measuring the wrong thing, even if people see defect fixing as quality. Instead, if I want to assess quality in these cases, perhaps I should be measuring the ability to maintain the system or the experience of the user.

I might also use a measure that gives a false reading, even if it reliably gives the same false reading every time. For example, if pay rises are predicated on “displaying an agile mindset,” and I ask people, just before their pay review, whether they have an agile mindset, then I think that I will reliably receive the answer “yes”, regardless of whether the mindset is a fixed or a growth one.

Validity was important for my assessment this time because the team will make decisions on the result. However there is a mitigation in that the team will debate my assessment as a group.

Acceptance

If reliability and validity make a measure or assessment useful, it is credibility that determines if it is actually used.

If people do not understand or accept the score, number, rating or opinion that comes out of the assessment, then they will not act on the results.

For example:

- I believe that “share of voice” is a good measure of team empowerment and effectiveness. This reflects whether everyone in the team gets to talk just as much as each other. However I have found that sometimes if I point out that only some people were speaking, team members explain to me why they think that was valid. They justify the rating rather than considering it as a thing to assess and maybe change. They may have a point, or I might be right, but either way it is a poor assessment if it will not be understood and used by the consumer; and

- When senior managers learn what velocity really measures, they often question whether it is a measure of team performance (which it is not). So while velocity is a good measure for the team to use in predicting what they can achieve, if I tell executives that the team is consuming stories at a good rate of points, they are likely to be more baffled than informed.
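To make the “share of voice” example a little more concrete, here is a minimal sketch of how it could be calculated. Everything specific in it is an assumption for illustration: the names, the speaking times in seconds, and the choice of a simple smallest-to-largest ratio as a balance score.

```python
def share_of_voice(speaking_seconds):
    """Return each person's fraction of the total speaking time."""
    total = sum(speaking_seconds.values())
    if total <= 0:
        raise ValueError("Total speaking time must be positive")
    return {name: seconds / total for name, seconds in speaking_seconds.items()}


def balance(shares):
    """Smallest share divided by largest share; 1.0 means everyone spoke equally."""
    return min(shares.values()) / max(shares.values())


# Hypothetical speaking times (in seconds) observed in one meeting.
observed = {"Ana": 300, "Ben": 100, "Cy": 100}

shares = share_of_voice(observed)
for name, share in shares.items():
    print(f"{name}: {share:.0%}")
print(f"balance: {balance(shares):.2f}")
```

Showing the team the simple percentages, rather than only a single score, might make the result easier to understand and debate, which is the point of this section: a number the team does not accept will not be acted on.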

I have found this more problematic when an assessment is likely to challenge existing views and biases. If I rate a team as bad at cooking when they have a reputation for being great cooks, then people need a lot more convincing than if I confirm their existing views.

For the team I am assessing, I might want to make sure my assessment results are easy to understand and also credible.

Decision support and Educational Effects

So my assessment was (I believe) reliable enough, valid with some level of precision and accepted by the team.

However, if the team are going to learn from the assessment then it must be well designed to help them learn. This is again a matter of context. For example, if I was a school teacher and I was assessing students in a final exam (when they should know their material), then the assessment need not provide much support for student learning, but the educational effect would be critical when I was using formative assessment during the semester.

In this case the assessment I am doing is literally designed for team learning, so the ability for the team members to apply the results to their learning is the most important criterion for success. This means my results must be simple, related to what the team wants to be good at, timely and helpful in clarifying a next step.

Some questions that help here are:

- Does this assessment help to clarify team goals or the goal of what we want them to do?

- Does the assessment make it easy to identify what was good, what could be improved and what to do next?

- Is the assessment timely – is the information still relevant and more importantly, provided in time to reflect on and change the habit, behaviour or output?

- Is the information provided simple, specific and clear? Or is it cluttered, overwhelming and vague?

Cost (or efficiency)

I could do an amazingly detailed assessment of the team, bringing in multiple coaches equipped with video cameras, regression testing, fitness trackers and heaps of technology. I could even fly the whole team to a specialist lab in Silicon Valley somewhere, in order to participate in a herculean set of simulations.

Of course the cost of doing so would be far higher than the potential benefit from increased performance.

The best assessment would be real time, created by the team themselves as part of the work, without any delay or other impact on what they are doing.

As much as possible, I like to create a way for the teams to better assess themselves in the moment of their work, rather than having me audit them.

In this case though, I spent some of my time (and theirs) in assessing them and communicating the result. So it was important to make sure that my assessment consumed just enough time to create useful lessons.

It is hard to know in advance, with certainty, whether the assessment will be worth doing, but we can make an educated guess. For example I know not to do a whole battery of tests if I know the main challenge for the team is that they have really bad retrospectives. I would be better off just assessing the retrospectives and then focusing more time on helping make improvements there.

What to take away from all this reflection

So, for me to assess whether any assessment I want to do is going to be worthwhile:

- I should know who needs something from the assessment and what they need;

- This should help me decide what I should take for granted, what to focus on in my initial assessment and what to ignore for now;

- I should design an assessment that is sufficiently:

- Reliable

- Valid

- Accepted

- Supportive of ongoing learning (and/or decisions)

- Cost (and time) effective

When I am done, I can assess whether I achieved these goals to “assess the quality of my assessment” and get better at assessing in the future.

What has been left out of this assessment of assessments?

I have not assessed whether the team used what I shared or whether doing so proved useful to them.

Perhaps that is the next thing I should assess. Given I have shared some results, how useful did sharing them turn out to be?

With all this assessment of assessments, should I be able to do even better assessments?

Of course I hope to continuously improve my assessments. However sometimes when I think about what assessment to do I actually go the other way and drop the whole concept of assessing teams.

Instead, sometimes I will postpone my assessment and just observe for a little while longer without judgement. Sometimes curiosity is a better coaching tool than judgement, assuming that the curiosity leads to information that is shared with the team.

Then I might come up with a hunch, which leads me to a hypothesis, which leads me to wanting to conduct an experiment or some kind of assessment, in which case I am right back at the start of this article again.
